Business Process & Content Management Consultants

Transform the Way You Work.

Maximize Productivity & Empower Employees with Technology.

What We Do

Our Solutions

Enterprise Content Management

Enterprise content management (ECM) is a technology that allows you to gain control of your organization’s critical information.

OCR Software

The sooner you get your content and data into your key systems and into the hands of those who need it, the more efficient your employees and overall processes will be.

Robotic Process Automation

Robotic Process Automation (RPA) software drives business efficiency and accuracy by relieving your staff of repetitive work through a digital workforce.

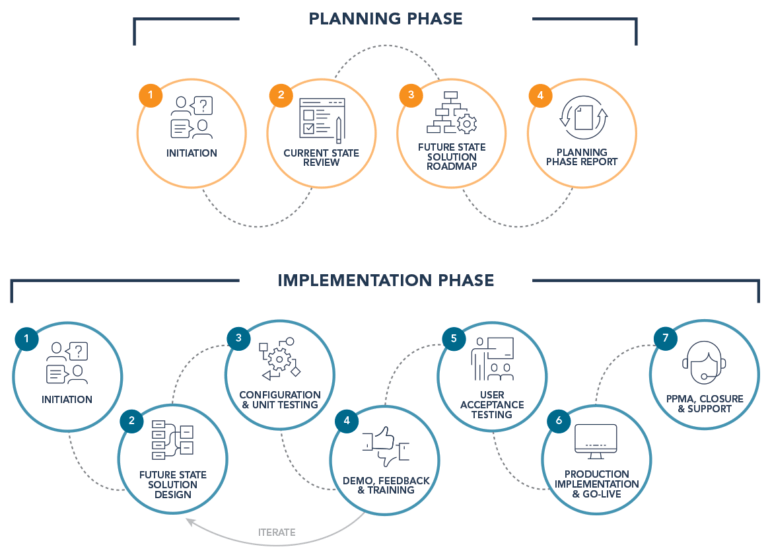

A Process-First Approach

Our process-first approach brings focus to the importance of process improvement prior to implementing best-in-class solutions.

Learn About Our Process

Gartner & Forrester Industry-Leading Solutions

Our Software

A single content services platform for managing content, processes, and cases, combining ECM, case management, BPM, records management, compliance, and capture capabilities.

Data capture platform that accurately sorts structured and unstructured documents, both paper and electronic, and seamlessly passes content to your core business applications.

ABBYY Vantage is an Intelligent Document Processing platform that uses artificial intelligence (AI) to capture, extract, and process data embedded in documents of any type and structure.

ABBYY Timeline is a process intelligence platform that can pinpoint inefficiencies and give you a better view of your business processes.

The Content Portal, powered by Jadu, is a fully integrated web content management portal solution that enhances your operational efficiency and supercharges your digital transformation.

Invoice Cloud provides a complete, secure electronic bill presentment and payment (EBPP) solution that streamlines the payment process and enhances payment capabilities.

Hyland’s Alfresco platform offers open, cloud-native content management solutions to connect, manage and protect your enterprise’s most important information, wherever it lives.

The Nuxeo Platform is a cloud-native, highly scalable asset management solution that accelerates speed to market and improves processes, collaboration, and the user experience.

"A strong implementation partner is critical to a project’s success. Naviant has served in this role for us over a number of years and we have always been extremely pleased with their subject matter expertise, professionalism and ability to execute within agreed time frames and budgets. Would highly recommend."

"I worked directly with Naviant in implementing Finance Workflow (OnBase) for The Master Lock Company LLC. Their attention to detail, immediate responses and software knowledge made the implementation seamless. I am now working to further our relationship by incorporating the software throughout other departments of our company."

"Our organization has been a customer of Naviant for over ten years. We have purchased hardware, software, training and consulting services from Naviant to implement our OnBase document management solution throughout the Oneida Nation. I have had experience with all areas of Naviant from sales to support to administration to senior management. My experience has been one of working with a very responsive organization that has provided high value to Oneida. Without reservation, I can highly recommend the Naviant organization for all your document management needs."

"I have worked with Naviant over the past couple of years and have found their expertise with assisting us with OnBase for our document management and workflow processing to be excellent."

"I am going on 6 years in association with Naviant. In my massive amount of years working with Vendors/Vendor support, Naviant is always there for us! They’ve got staff that I swear work 25 hour days (yes, I said 25 hour days). A great example of this just occurred last week when we upgraded OnBase and EPIC concurrently. Kathy Hughes stayed by our side (remotely) and she refused to leave us until we knew everything was working to perfection. No other Vendor Support has ever provided such excellent support and general caring for our needs. I’m also amazed that when it comes to adding more functionality to OnBase, Naviant Reps don’t try to have us add more than what we need. They want us to succeed without financially draining our IT budget. Thanks Naviant!"

"We have worked with Naviant for the last 8+ years after implementing OnBase for document retention and business process improvement. Naviant has been a great partner over the years and their staff is very knowledgeable in workflow and process improvement. Questions are answered in a timely fashion and they have a high degree of professionalism. We lean heavily on their Professional Services Group for support and we are always impressed by their strong business sense and depth of technical expertise."

"Naviant, Inc. is a great company to partner with on enterprise content management. Their staff is knowledgeable, courteous and helps the customer achieve their goals. Staff is always pleasant and truly gives you the feeling they enjoy working for this company and what they do."

How Upskilling and Reskilling Drive Digital Transformation Success

OnBase Log License Usage Best Practice Recommendations

Interested to see what ECM can do for your company?

We’re ready to help!